Rather than providing a technical overview of the current state of robotic exoskeleton technology or its application domains, this essay by Assoc. Prof. Barkan Uğurlu from the Faculty of Engineering presents the personal observations of a researcher who has worked in this field for twenty years. It focuses on the earliest stage of an exoskeleton prototype, before it reached the outside world; on the experience of the first person to interact with it, the researcher himself; and on the intellectual path shaped by that encounter.

Robots are uncanny entities.

Since a summer evening in 2006 when I was testing a newly designed controller on a robot prototype, this feeling has remained deeply embedded in me. Even if I inspect every line of software and examine every hardware component in detail, the factor of uncertainty in an experiment can never be reduced to zero. Unexpected behaviors in prototypes are familiar to all engineers working in applied settings. However, the physical structure of robots — their motion and power capabilities — and the unimaginable movements they may exhibit when things go out of control make them uncanny in my eyes.

Sometimes a robotic limb suddenly swings at full speed and brushes past my face. At other times, a quadruped robot whose communication network has been disrupted charges toward me. The emergency stop button, on the other hand, tends to work only when the situation is not actually an emergency.

You cannot work with prototype robots remotely. If a robot behaves exactly as I expect, I walk up to it and try to break it. I push it, pull it, and disturb it, attempting to understand its limits. My relationship with robots is mediated through control, and it is an uncanny relationship.

After working on humanoid robots for about five years, both theoretically and experimentally, I received a job offer in 2011 from the Toyota Technological Institute. The institute was planning to invest in exoskeleton technology — a class of wearable robots — and I was expected to transfer the technologies I had developed for humanoid robots to exoskeleton systems.

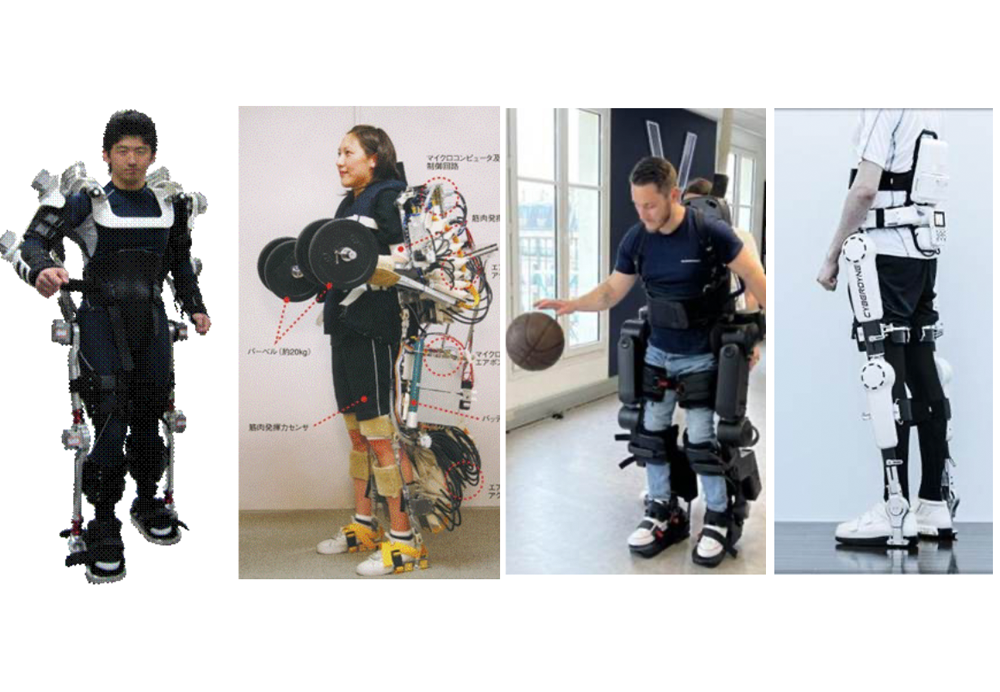

At this point, let us first define what a robotic exoskeleton is. A robotic exoskeleton is a wearable robotic platform that provides power assistance to a specific joint, a group of joints, or the entire body of the user. Various types are shown in Figure 1. While passive variants that provide no power amplification also exist, active robotic exoskeletons capable of delivering assistive torque are used particularly in rehabilitation, industrial applications requiring physical support, and — at least conceptually — in military scenarios.

The exoskeleton prototype we developed at Toyota Technological Institute, called TTI-Exo, is shown on the far left of Figure 1. As soon as the mechanical design and assembly stages were completed, I tried the robot on myself without implementing any advanced control strategy. Two clear observations emerged:

- Moving inside it was extremely difficult; even with considerable effort, my mobility was severely limited.

- When the robot was commanded externally, being moved beyond the boundaries of my own agency felt deeply uncomfortable.

To step inside robots that already felt uncanny even from a distance — and to enter this research field itself — was like entering the labyrinth of the Minotaur: one would either never find the way out, or emerge no longer the same person who had entered.

Technically speaking, the first sensation that disturbed me reflects the absence of what the literature calls transparency (ideally, zero impedance): a transparent exoskeleton lets its wearer move as if it were not there. To achieve high torque capacity, robotic actuators typically incorporate high gear reduction ratios. This in turn introduces friction and reflected inertia, which grows with the square of the gear ratio, and makes the joints difficult or impossible to backdrive. Addressing these issues required constructing a highly detailed mathematical model in both actuator and joint spaces and characterizing all friction regimes.

Once this was achieved, the actuators began to behave like ideal force sources. Internal disturbance forces were effectively compensated, and loads due to the robot's own weight were handled via model-based compensation. The system could then deliver precisely the desired amount of force at precisely the desired moment.
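The compensation described above can be sketched for a single geared joint. This is a minimal illustration under stated assumptions, not the TTI-Exo controller: the joint model, every numerical parameter, and the choice of a Stribeck-plus-viscous friction model are hypothetical. The motor command is computed from the model alone, without a torque sensor, so that the joint cancels its own friction, its reflected rotor inertia (rotor inertia times the square of the gear ratio), and the weight of its link, and then adds whatever torque is requested. A requested torque of zero corresponds to the transparency mode.

```python
import math
from dataclasses import dataclass

G = 9.81  # gravitational acceleration [m/s^2]


@dataclass
class JointModel:
    """Hypothetical single-joint parameters (illustrative values only)."""
    gear_ratio: float = 100.0        # N: motor-to-joint reduction
    rotor_inertia: float = 1.0e-5    # J_m [kg m^2], motor side
    link_mass: float = 3.0           # m [kg]
    com_distance: float = 0.2        # l [m], joint axis to link center of mass
    coulomb: float = 1.5             # Coulomb friction level [N m], joint side
    static: float = 2.0              # breakaway (static) friction [N m]
    stribeck_velocity: float = 0.05  # [rad/s], width of the Stribeck dip
    viscous: float = 0.8             # viscous coefficient [N m s/rad]
    smoothing: float = 0.01          # [rad/s], tanh width replacing sign()


def friction_torque(p: JointModel, qd: float) -> float:
    """Static/Coulomb (Stribeck) plus viscous friction at joint velocity qd."""
    level = p.coulomb + (p.static - p.coulomb) * math.exp(-(qd / p.stribeck_velocity) ** 2)
    return level * math.tanh(qd / p.smoothing) + p.viscous * qd


def gravity_torque(p: JointModel, q: float) -> float:
    """Gravity load of one link; q = 0 means the link hangs straight down."""
    return p.link_mass * G * p.com_distance * math.sin(q)


def motor_torque_command(p: JointModel, q: float, qd: float, qdd: float,
                         tau_desired: float) -> float:
    """Motor-side torque that lets the joint deliver tau_desired to the user.

    Friction, reflected inertia, and link weight are cancelled using the model,
    so tau_desired = 0 makes the joint (ideally) feel weightless and free.
    """
    reflected_inertia = p.rotor_inertia * p.gear_ratio ** 2
    joint_torque = (tau_desired
                    + gravity_torque(p, q)
                    + friction_torque(p, qd)
                    + reflected_inertia * qdd)
    return joint_torque / p.gear_ratio  # map joint torque back through the gearbox
```

In a real system the acceleration `qdd` would have to be estimated from noisy encoder data, and the model parameters identified experimentally. The `tanh` term stands in for a discontinuous sign function so that the friction compensation does not chatter around zero velocity; a power-assist mode would simply pass a nonzero `tau_desired`.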

Figure 1. Example exoskeleton systems. From left to right: TTI-Exo (Ugurlu et al., 2020), Power Assist Suit (Ishii et al., 2005), Atalante (Vigne et al., 2020), Cyberdyne HAL (Tsukahara et al., 2010)

After testing my first exoskeleton controller for a while without wearing it, I chose myself as the first subject. The English phrase "a taste of your own medicine" echoed in my mind before the experiment, although it does not quite capture the situation.

First, I tried the transparency mode. The robot provided no assistance, but I encountered no resistance either. I could feel the presence of a shell around my body, yet it had no weight. While the non-engineer side of me was experiencing this new sensation, the engineer within me was thinking: “OK, the sensorless torque control works.”

Then I switched to the power-assist mode and picked up a 10-kilogram dumbbell. The prototype provided support throughout my entire body. As I held the dumbbell, I was engaged in a physical interaction I had never experienced before. There was a weight in my hand, yet I felt I could hold it for hours without fatigue. It had presence, but no weight. I could feel it, yet gravity seemed to have vanished for that object.

I moved it slightly, held it for a while, and eventually grew bored. The software and the prototype were working properly. Experiments proceeded with different subjects to avoid bias. As for me, I never felt the need to use that robot again.

After two years of work, I joined the Computational Neuroscience Laboratories at ATR in Kyoto. The group I joined was called the Brain–Robot Interface group, and as the name suggests, the goal was to establish a direct link between the human brain and a robot.

Such interfaces can be either non-invasive or invasive. In invasive interfaces, electrodes are surgically implanted in specific regions of the brain. Non-invasive interfaces, by contrast, rely on external measurements such as EEG signals recorded from the scalp. Our group followed the non-invasive approach.

One line of research attempted to classify the phases of human walking using EEG signals recorded through electrodes placed on the scalp. In parallel with this work, an exoskeleton robot called XoR, driven by artificial muscles, was developed (see Figure 2).

In Japan, where the population is rapidly aging, rehabilitation is one of the primary target areas for exoskeleton technologies. We brought the exoskeleton developed at ATR to a rehabilitation clinic and worked with therapists on the system.

Observing rehabilitation sessions in the clinic, I sensed that although the robot could indeed assist users, the combined human-robot system might not be sufficiently stable in terms of balance. The exoskeleton was exposed to disturbances from both the user and the environment, and its operation was not fully safe.

This led me to the idea that an exoskeleton should be able to maintain its own balance under all conditions. I began by studying the biomechanics of human balance. Contrary to my initial assumption — and unlike what we implemented in humanoid robots — human balance is achieved less through muscular strength and more through the central nervous system regulating the mechanical impedance of muscles.

Based on this insight, I developed a bio-inspired control mechanism for the XoR exoskeleton, which is driven by artificial muscles. Even when the only occupant of the robot was a lifeless articulated mannequin, XoR was able to maintain balance under strong disturbances by regulating the impedance of its artificial muscles in real time. It behaved like a roly-poly toy.
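The principle of balancing by regulating impedance rather than by raw strength can be illustrated with a deliberately simplified model: the exoskeleton and its occupant treated as a single inverted pendulum pivoting at the ankle, with the ankle torque given by a stiffness-damping (impedance) law whose stiffness grows with the lean angle. This is a sketch under stated assumptions, not the XoR controller reported in Ugurlu et al. (2016), which acted on artificial muscles in a multi-joint system; the stiffness schedule, masses, gains, and the `simulate_push` helper are all hypothetical.

```python
import math

G = 9.81  # gravitational acceleration [m/s^2]


def ankle_stiffness(theta: float, k_base: float, k_gain: float) -> float:
    """Hypothetical stiffness schedule: the ankle stiffens as the body leans further."""
    return k_base + k_gain * abs(theta)


def simulate_push(k_base: float, k_gain: float, damping: float, push_velocity: float,
                  mass: float = 60.0, height: float = 1.0, duration: float = 10.0,
                  dt: float = 1e-3, fall_angle: float = 1.0):
    """Inverted pendulum on an impedance-controlled ankle (theta = 0 is upright).

    The push is modeled as an initial lean rate. Returns
    (max |theta| reached, final theta, whether the body fell past fall_angle).
    """
    inertia = mass * height ** 2
    theta, omega = 0.0, push_velocity
    max_abs = 0.0
    for _ in range(int(duration / dt)):
        k = ankle_stiffness(theta, k_base, k_gain)
        tau_ankle = -k * theta - damping * omega             # impedance law
        tau_gravity = mass * G * height * math.sin(theta)    # toppling torque
        omega += (tau_gravity + tau_ankle) / inertia * dt    # semi-implicit Euler
        theta += omega * dt
        max_abs = max(max_abs, abs(theta))
        if abs(theta) > fall_angle:
            return max_abs, theta, True
    return max_abs, theta, False


# Baseline stiffness above m*g*l (about 589 N m/rad here): the push is absorbed.
recovered = simulate_push(k_base=800.0, k_gain=4000.0, damping=250.0, push_velocity=0.3)
# Baseline stiffness below m*g*l with no stiffening: the same push topples it.
toppled = simulate_push(k_base=300.0, k_gain=0.0, damping=250.0, push_velocity=0.3)
```

The comparison highlights why stiffness, not force magnitude, is the deciding variable in this toy model: near upright, gravity acts like a negative spring of strength m·g·l, so the ankle stays balanced only if its stiffness exceeds that value, and raising stiffness as the lean grows adds margin exactly where the disturbance is largest.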

The XoR system developed by ATR’s Brain–Robot Interface group thus became the first self-balancing exoskeleton reported in the literature (Ugurlu et al., 2016) and was also controlled via a brain-robot interface (Noda et al., 2012).

In 2015, when I joined Özyeğin University (OzU), I set the goal of making Türkiye one of the leading countries in exoskeleton technology. The exoskeleton developed at ATR could stabilize itself only in the sagittal (front–back) plane. Shortly after I joined OzU, a proposal I submitted to TÜBİTAK's 1001 research program was accepted.

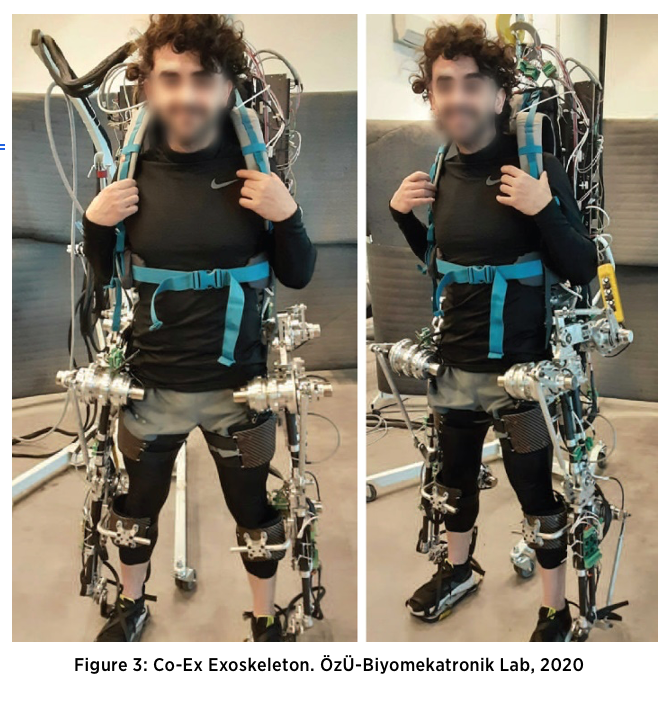

Within this project, we developed Co-Ex (Compliant Exoskeleton for Human-Robot Co-Existence; see Figure 3), an exoskeleton equipped with custom force-controlled actuators and capable of maintaining balance along all axes. By 2020, we had completed all functional tests. These results were published in the literature (Coruk et al., 2020; Soliman et al., 2024), and the system was also protected by patents (Yıldırım et al., 2026).

Research on exoskeleton systems in my laboratory continues along several evolutionary lines in collaboration with my colleagues.

Let us now set aside this twenty-year trajectory and return to the moment of my first encounter with the exoskeleton that was built at Toyota Technological Institute.

Before the projects, patents, software, mechanical designs, debates, and syntheses — there was that moment. Like all prototypes, the system had clear performance limits; it was not a science-fiction device, but a technology my colleagues in the field could easily understand.

Yet there was something uncanny in the artificial force I felt in my palm. From time to time, I still think about that moment.

The uncanny aspect was not the magnitude of the force.

It was its source.

I knew the detailed mathematical model of the entire system. Every line of the control software belonged to me. But when the load in my hand suddenly felt lighter, the question ceased to be technical:

Who was the agent of that movement?

Was I lifting that weight, or was a system working alongside me?

What makes a limb mine?

Is it merely the neural command that initiates movement, or the function that the limb performs?

If the function is decisive, at what point does the externally supplied force become mine?

In Aristotle's theory of matter and form, the soul is the first actuality (entelecheia) of a natural body that potentially has life. "If the eye were an animal," he writes, "sight would be its soul."

Let us imagine that we have produced an exoskeleton fully integrated with the nervous system, operating through continuous feedback and incorporated into the body schema. Suppose that on another planet, under high gravitational conditions, we cannot perform our daily activities without such an exoskeleton. Through continuous use, this artificial system becomes mapped within cortical representations as if it were a natural limb.

Now imagine that exoskeleton technology advances with each new generation, and that newborns able to adapt to the exoskeleton through the plasticity of the central nervous system gain a reproductive advantage, so that natural selection favors them. Over time, this gives rise to a co-evolution of humans and exoskeletons. Would the humans in such a scenario still be the same humans as us?

And suppose that one day the exoskeleton, integrated into the nervous system, begins to participate in human decision-making. Would this not produce a new rational unity? Imagine reasoning, goal formation, and even normative evaluation being carried out jointly.

Would such a human-robot hybrid still belong to the same species as we do?

The artificial force I felt in my palm that day still remains somewhere in the shadowy corner of my mind, without possessing any form of its own.

“I want more life, father.”

Roy Batty, *Blade Runner*, 2019 (1982)

References

Coruk, S., Soliman, A. F., Dalgic, O., Yildirim, M. C., and Ugurlu, B. (2020). Towards Crutch-Free 3-D Walking Support with the Lower Body Exoskeleton Co-Ex: Preliminary Experiments. In Proc. of the International Symposium on Wearable Robotics (WeRob).

Ishii et al. (2005). Stand-Alone Wearable Power Assist Suit: Development and Availability. Journal of Robotics and Mechatronics, 17(5).

Noda et al. (2012). Brain-Controlled Exoskeleton Robot for BMI Rehabilitation. In Proc. of the 12th IEEE-RAS International Conference on Humanoid Robots.

Soliman, A. F., Ugurlu, B., et al. (2024). Design, Development, and Control for the Self-Stabilizing Bipedal Exoskeleton Prototype Co-Ex. IEEE/ASME Transactions on Mechatronics, 30(1).

Tsukahara et al. (2010). Sit-to-Stand and Stand-to-Sit Transfer Support for Complete Paraplegic Patients with Robot Suit HAL. Advanced Robotics, 24(11).

Ugurlu, B., et al. (2016). Variable Ankle Stiffness Improves Balance Control: Experiments on a Bipedal Exoskeleton. IEEE/ASME Transactions on Mechatronics, 21(1).

Ugurlu, B., et al. (2020). Active Compliance Control Reduces Upper Body Effort in Exoskeleton-Supported Walking. IEEE Transactions on Human-Machine Systems, 50(2).

Vigne et al. (2020). Improving Low-Level Control of the Exoskeleton Atalante in Single Support by Compensating Joint Flexibility. In Proc. of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2020).

Yildirim, M. C., Ugurlu, B., Sendur, P., Emre, S., Derman, M., & Coruk, S. (2026). Wearable lower extremity exoskeleton (U.S. Patent No. 12,521,298). U.S. Patent and Trademark Office. https://patents.google.com/patent/US12521298B2/en